As businesses adopt more technology, the volume of logs and the number of sources that generate them grow rapidly. Without a centralized approach, managing logs across diverse systems and applications becomes difficult. To address this challenge, many organizations turn to log management solutions like Fluentd.

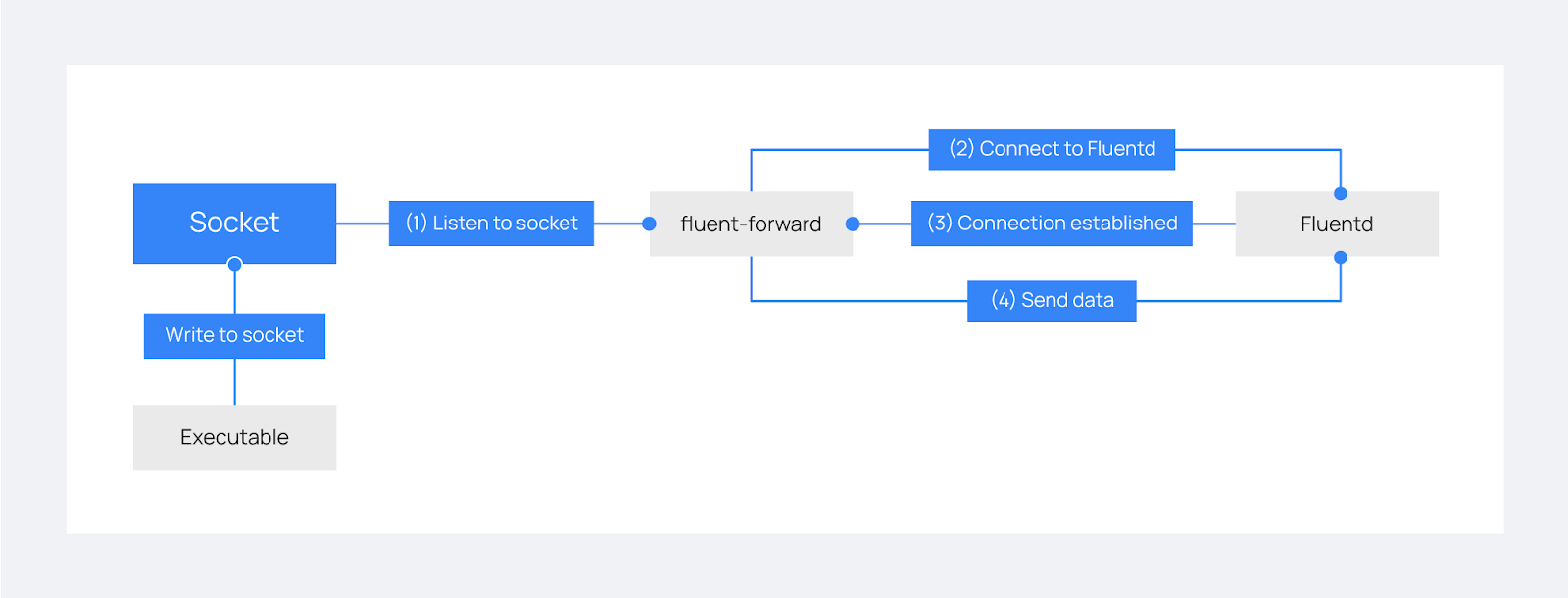

Fluentd is an open source data collector designed to unify the collection and aggregation of logs from various sources. Wazuh integrates with Fluentd using the Wazuh Fluentd forwarder module, which allows the forwarding of events to a Fluentd server. The image below shows how this module operates.

This blog post demonstrates how to forward Wazuh logs to Fluentd by leveraging the Wazuh Fluentd forwarder module to enhance log management. The logs are then forwarded to Hadoop, used as a data lake for big data workloads.

Infrastructure

We set up the following infrastructure to demonstrate the Wazuh integration with Fluentd:

- A pre-built, ready-to-use Wazuh OVA 4.7.3. Follow this guide to download the virtual machine. This endpoint hosts the Wazuh central components (Wazuh server, Wazuh indexer, and Wazuh dashboard).

- An Ubuntu 22.04 endpoint which will serve as a log management server. We will install Fluentd to handle log collection and forwarding to Hadoop, which will serve as the final destination for the collected logs.

Configuration

Ubuntu endpoint

Installing and configuring Fluentd

Fluentd can be installed in several ways, and the location of its configuration file depends on the option you choose. The configuration file dictates how Fluentd behaves, which plugins it uses, and how it processes data.

- If the installation is done using the td-agent packages, the configuration file will be located at /etc/td-agent/td-agent.conf. By default, td-agent writes its operation logs to the /var/log/td-agent/td-agent.log file.

- If the installation is done using the fluent-package, you can find a default configuration file at /etc/fluent/fluentd.conf. By default, Fluentd writes its operation logs to the /var/log/fluent/fluentd.log file.

- If the installation is done using calyptia-fluentd, you can find a default configuration file at /etc/calyptia-fluentd/calyptia-fluentd.conf. By default, calyptia-fluentd writes its operation logs to the /var/log/calyptia-fluentd/calyptia-fluentd.log file.
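If you are not sure which installation method was used on an endpoint, a small script can check which of the default configuration files listed above is present. The following is a minimal sketch; the `detect_fluentd_layouts` helper and the `FLUENTD_LAYOUTS` table are hypothetical names introduced here for illustration, and the paths are the defaults from the list above.

```python
from pathlib import Path

# Default config and log locations per installation method, as listed above.
FLUENTD_LAYOUTS = {
    "td-agent": (
        "/etc/td-agent/td-agent.conf",
        "/var/log/td-agent/td-agent.log",
    ),
    "fluent-package": (
        "/etc/fluent/fluentd.conf",
        "/var/log/fluent/fluentd.log",
    ),
    "calyptia-fluentd": (
        "/etc/calyptia-fluentd/calyptia-fluentd.conf",
        "/var/log/calyptia-fluentd/calyptia-fluentd.log",
    ),
}


def detect_fluentd_layouts(exists=lambda p: Path(p).is_file()):
    """Return the installation methods whose default config file is present.

    `exists` is injectable so the lookup can be exercised without touching disk.
    """
    return [name for name, (conf, _log) in FLUENTD_LAYOUTS.items() if exists(conf)]


if __name__ == "__main__":
    for name in detect_fluentd_layouts():
        conf, log = FLUENTD_LAYOUTS[name]
        print(f"{name}: config={conf} log={log}")
```

The script only reports default locations; a customized installation may keep its files elsewhere.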

A detailed Fluentd file configuration syntax guide is available in the Fluentd official documentation.

Follow the steps below to install Fluentd on your Ubuntu endpoint:

Note: You need root user privileges to execute the commands below.

1. Run the following command to download and install Fluentd using the fluent-package installation script:

# curl -fsSL https://toolbelt.treasuredata.com/sh/install-ubuntu-jammy-fluent-package5-lts.sh | sh

2. Append the following to the Fluentd configuration file /etc/fluent/fluentd.conf to configure forwarding logs to Hadoop:

<match wazuh>
  @type copy
  <store>
    @type webhdfs
    host localhost
    port 9870
    append yes
    path "/Wazuh/%Y%m%d/alerts.json"
    <buffer>
      flush_mode immediate
    </buffer>
    <format>
      @type json
    </format>
  </store>
  <store>
    @type stdout
  </store>
</match>

3. Start the Fluentd service:

# systemctl start fluentd
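The path setting in the configuration above is a strftime-style template, so alerts land in a per-day directory in HDFS. The sketch below only illustrates how such a template expands for a given timestamp; `hdfs_path_for` is a hypothetical helper, and which clock and timezone Fluentd applies when it resolves the template is not covered here.

```python
from datetime import datetime

# Copied from the <store> block of the Fluentd configuration above.
PATH_TEMPLATE = "/Wazuh/%Y%m%d/alerts.json"


def hdfs_path_for(ts: datetime, template: str = PATH_TEMPLATE) -> str:
    """Expand the strftime placeholders in the template for one timestamp."""
    return ts.strftime(template)


# An event timestamped 13 March 2024 maps to that day's directory.
print(hdfs_path_for(datetime(2024, 3, 13, 9, 30)))  # /Wazuh/20240313/alerts.json
```

This is the same path format used when checking the stored alerts later in this post.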

Installing and configuring Hadoop

Hadoop is open source software designed for reliable, scalable, distributed computing, and it is widely used as a data lake for AI projects. One of its key components is the Hadoop Distributed File System (HDFS), which provides high-throughput access to application data, a requirement for big data workloads.

Follow the steps below to install Hadoop on the Ubuntu endpoint:

Note: You need root user privileges to execute the commands below.

1. Run the following commands to install the prerequisites:

# apt update && apt upgrade -y
# apt install openssh-server openssh-client -y
# apt install openjdk-11-jdk -y

2. Create a non-root user for Hadoop:

# adduser hdoop
# usermod -aG sudo hdoop

3. Switch to the hdoop user:

# su hdoop

4. Set up SSH keys so that the hdoop user can SSH into localhost on the Hadoop server without being prompted for a password:

# ssh-keygen -t rsa -P '' -f ~/.ssh/id_rsa
# cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

5. Restrict the permissions on the SSH key files:

# chmod 600 ~/.ssh/authorized_keys
# chmod 700 ~/.ssh

6. Run the following commands to download and install Hadoop:

# sudo wget https://downloads.apache.org/hadoop/common/hadoop-3.3.6/hadoop-3.3.6.tar.gz
# sudo tar xzf hadoop-3.3.6.tar.gz
# sudo mv hadoop-3.3.6 /usr/local/hadoop
# sudo chown -R hdoop:hdoop /usr/local/hadoop

7. Configure the Hadoop environment variables by defining the Java home directory:

# echo 'export JAVA_HOME=$(readlink -f /usr/bin/java | sed "s:bin/java::")' | sudo tee -a /usr/local/hadoop/etc/hadoop/hadoop-env.sh

8. Edit the Hadoop XML configuration file /usr/local/hadoop/etc/hadoop/core-site.xml to add the following lines:

<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://localhost:9000</value>
  </property>
</configuration>

9. Initialize the Hadoop filesystem namespace:

# /usr/local/hadoop/bin/hdfs namenode -format

10. Launch HDFS and YARN services:

# /usr/local/hadoop/sbin/start-dfs.sh
# /usr/local/hadoop/sbin/start-yarn.sh

You can now access the Hadoop NameNode and ResourceManager interfaces via a web browser using the following URLs:

- NameNode: http://localhost:9870

- ResourceManager: http://localhost:8088

11. Create a folder in your HDFS to store Wazuh alerts:

# /usr/local/hadoop/bin/hadoop fs -mkdir /Wazuh
# /usr/local/hadoop/bin/hadoop fs -chmod -R 777 /Wazuh

12. Enable WebHDFS and append support in HDFS, which the Fluentd webhdfs output configured earlier relies on, by inserting the following settings within the <configuration> block of the /usr/local/hadoop/etc/hadoop/hdfs-site.xml file:

<property>
  <name>dfs.webhdfs.enabled</name>
  <value>true</value>
</property>
<property>
  <name>dfs.support.append</name>
  <value>true</value>
</property>
<property>
  <name>dfs.support.broken.append</name>
  <value>true</value>
</property>

13. Restart Hadoop to apply the changes:

# /usr/local/hadoop/sbin/stop-all.sh
# /usr/local/hadoop/sbin/start-all.sh
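Once Hadoop is back up, you may want to confirm that the WebHDFS endpoint on port 9870 responds before pointing Fluentd at it. The sketch below builds WebHDFS REST URLs of the form /webhdfs/v1/<path>?op=<OPERATION> and lists the /Wazuh directory with the LISTSTATUS operation. The helper names `webhdfs_url` and `list_directory` are hypothetical, and the host and port are assumed to match the values used in this post.

```python
import json
from urllib.parse import quote, urlencode
from urllib.request import urlopen


def webhdfs_url(path, op, host="localhost", port=9870, **params):
    """Build a WebHDFS REST URL: http://host:port/webhdfs/v1/<path>?op=<OP>."""
    query = urlencode({"op": op, **params})
    return f"http://{host}:{port}/webhdfs/v1{quote(path)}?{query}"


def list_directory(path="/Wazuh", **kwargs):
    """Return the entry names under an HDFS directory using LISTSTATUS.

    Requires a running NameNode; raises URLError if it is unreachable.
    """
    with urlopen(webhdfs_url(path, "LISTSTATUS", **kwargs), timeout=5) as resp:
        statuses = json.load(resp)["FileStatuses"]["FileStatus"]
    return [entry["pathSuffix"] for entry in statuses]


if __name__ == "__main__":
    # Printing the URL needs no network access; call list_directory() on the
    # Hadoop endpoint itself to query the NameNode.
    print(webhdfs_url("/Wazuh", "LISTSTATUS"))
```

An empty list from `list_directory()` right after setup is expected, since no alerts have been forwarded yet.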

Wazuh server

This section explains how to configure the Wazuh Fluentd forwarder module to send events to a Fluentd server. This is done by adding the <fluent-forward> block in the /var/ossec/etc/ossec.conf file on the Wazuh server.

You can specify various parameters to customize the behavior of the module, such as the UDP socket path, Fluentd server address, shared key for authentication, and more. To learn about the configurable parameters within this block, refer to the Wazuh documentation on configuring the Fluentd forwarder module.

Follow the steps below to configure the Wazuh server:

Note: You need root user privileges to execute the commands below.

1. Append the following settings to the /var/ossec/etc/ossec.conf configuration file:

<ossec_config>
  <socket>
    <name>fluent_socket</name>
    <location>/var/run/fluent.sock</location>
    <mode>udp</mode>
  </socket>

  <localfile>
    <log_format>json</log_format>
    <location>/var/ossec/logs/alerts/alerts.json</location>
    <target>fluent_socket</target>
  </localfile>

  <fluent-forward>
    <enabled>yes</enabled>
    <tag>wazuh</tag>
    <socket_path>/var/run/fluent.sock</socket_path>
    <address><FLUENTD_IP></address>
    <port>24224</port>
  </fluent-forward>
</ossec_config>

The configuration above defines three blocks:

- The <socket> block defines a socket that the Fluentd forwarder will listen on. It is created at runtime in the designated path.

- The <localfile> block defines the input to send to the Fluentd forwarder.

- The <fluent-forward> block configures the Fluentd forwarder to listen on the socket and connect to the Fluentd server. Replace the <FLUENTD_IP> variable with the IP address of the Fluentd server.

Note: The Fluentd forwarder module also has a secure mode that uses CA certificates to verify the authenticity of the Fluentd server. Refer to the Wazuh documentation for detailed configuration options.

2. Restart the Wazuh manager to apply the changes.

# systemctl restart wazuh-manager
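The three blocks only work together if their names and paths line up: the <target> in <localfile> must name a defined <socket>, and the <socket_path> in <fluent-forward> must match that socket's <location>. The sketch below checks this wiring on a configuration string. It is an illustration rather than a Wazuh tool: `check_fluent_wiring` is a hypothetical helper, the address 192.0.2.10 is a documentation placeholder, and the parser assumes the text is well-formed XML once <FLUENTD_IP> has been replaced.

```python
import xml.etree.ElementTree as ET

# Same blocks as above, with a placeholder address substituted for <FLUENTD_IP>.
SAMPLE = """
<ossec_config>
  <socket>
    <name>fluent_socket</name>
    <location>/var/run/fluent.sock</location>
    <mode>udp</mode>
  </socket>
  <localfile>
    <log_format>json</log_format>
    <location>/var/ossec/logs/alerts/alerts.json</location>
    <target>fluent_socket</target>
  </localfile>
  <fluent-forward>
    <enabled>yes</enabled>
    <tag>wazuh</tag>
    <socket_path>/var/run/fluent.sock</socket_path>
    <address>192.0.2.10</address>
    <port>24224</port>
  </fluent-forward>
</ossec_config>
"""


def check_fluent_wiring(conf_text):
    """Return a list of mismatches between socket, localfile, and forwarder."""
    # A config file may hold several <ossec_config> blocks, so wrap them in one root.
    root = ET.fromstring(f"<root>{conf_text}</root>")
    sockets = {
        (s.findtext("name") or "").strip(): (s.findtext("location") or "").strip()
        for s in root.iter("socket")
    }
    problems = []
    for localfile in root.iter("localfile"):
        for target in (localfile.findtext("target") or "").split(","):
            target = target.strip()
            # Assumption: "agent" is the built-in default target and needs no <socket>.
            if target and target != "agent" and target not in sockets:
                problems.append(f"localfile target '{target}' has no matching <socket>")
    for forwarder in root.iter("fluent-forward"):
        path = (forwarder.findtext("socket_path") or "").strip()
        if path not in sockets.values():
            problems.append(f"socket_path '{path}' does not match any <socket> location")
    return problems


print(check_fluent_wiring(SAMPLE))  # [] when the blocks are consistent
```

A non-empty result points at the block to fix before restarting the Wazuh manager.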

Testing the integration

To test the integration, perform the following checks:

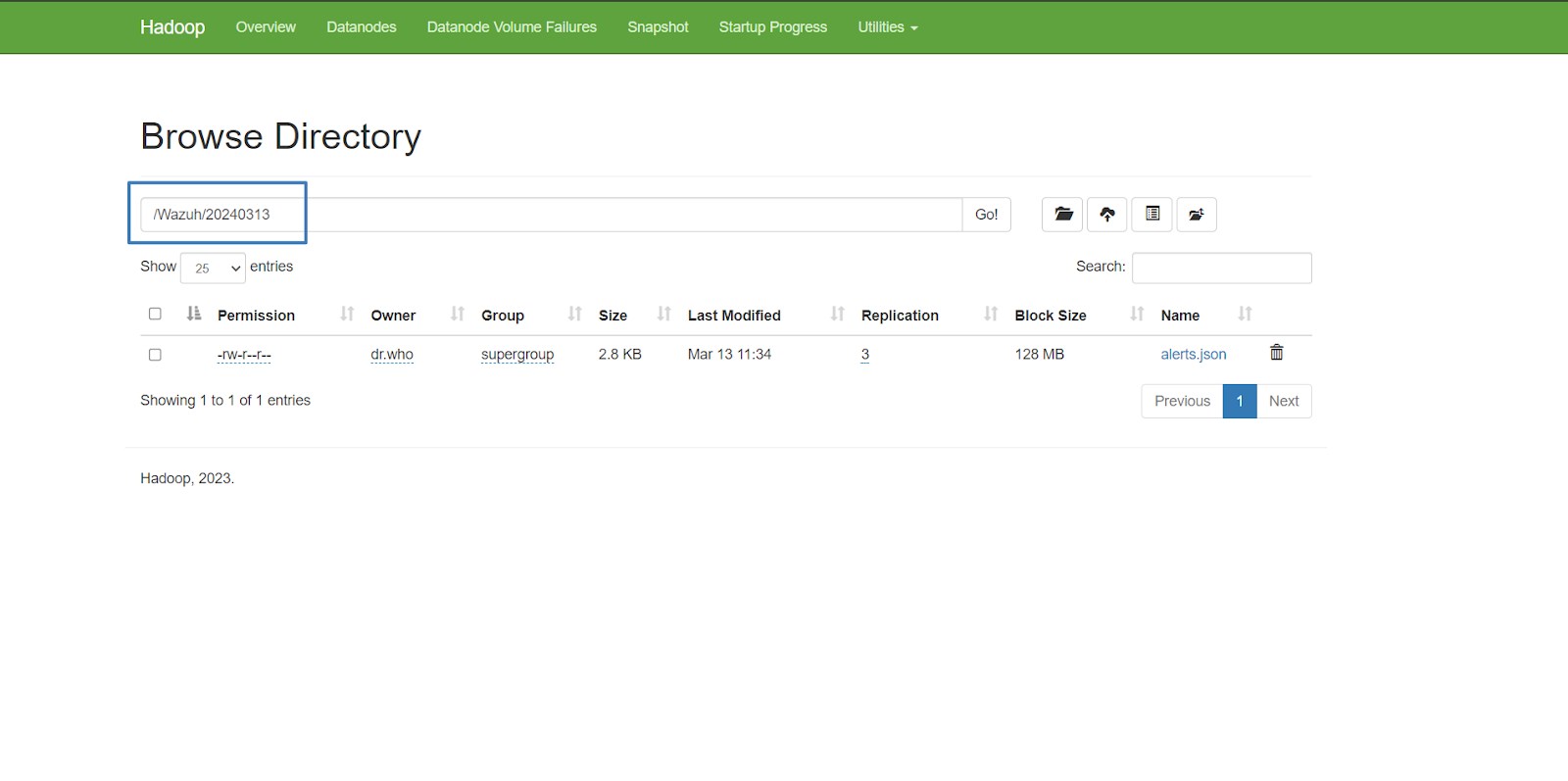

1. Check the Hadoop path /Wazuh/<DATE>/alerts.json, replacing <DATE> with the date in yyyymmdd format, to view the stored Wazuh alerts. For example:

# /usr/local/hadoop/bin/hadoop fs -tail /Wazuh/20240313/alerts.json

Note: It might take some time before the Wazuh server establishes a connection and starts pushing alerts to Fluentd. Only new alerts written to the /var/ossec/logs/alerts/alerts.json file are pushed to Fluentd, so you may need to simulate an event to generate alerts.

:::* 4071/rpcbind\\ntcp 0.0.0.0:1514 0.0.0.0:* 27940/wazuh-remoted\\ntcp 0.0.0.0:1515 0.0.0.0:* 27859/wazuh-authd\\ntcp6 127.0.0.1:9200 :::* 5616/java\\ntcp6 127.0.0.1:9300 :::* 5616/java\",\"decoder\":{\"name\":\"ossec\"},\"previous_log\":\"ossec: output: 'netstat listening ports':\\ntcp 0.0.0.0:22 0.0.0.0:* 5622/sshd\\ntcp6 :::22 :::* 5622/sshd\\ntcp 127.0.0.1:25 0.0.0.0:* 5605/master\\nudp 0.0.0.0:68 0.0.0.0:* 5397/dhclient\\ntcp 0.0.0.0:111 0.0.0.0:* 4071/rpcbind\\ntcp6 :::111 :::* 4071/rpcbind\\nudp 0.0.0.0:111 0.0.0.0:* 4071/rpcbind\\nudp6 :::111 :::* 4071/rpcbind\\nudp 127.0.0.1:323 0.0.0.0:* 4080/chronyd\\nudp6 ::1:323 :::* 4080/chronyd\\ntcp 0.0.0.0:443 0.0.0.0:* 4090/node\\nudp 0.0.0.0:842 0.0.0.0:* 4071/rpcbind\\nudp6 :::842 :::* 4071/rpcbind\\ntcp 0.0.0.0:1514 0.0.0.0:* 26485/wazuh-remoted\\ntcp 0.0.0.0:1515 0.0.0.0:* 26402/wazuh-authd\\ntcp6 127.0.0.1:9200 :::* 5616/java\\ntcp6 127.0.0.1:9300 :::* 5616/java\\ntcp 0.0.0.0:55000 0.0.0.0:* 26353/python3\",\"location\":\"netstat listening ports\"}"}

2. Alternatively, you can view the logs in the Hadoop NameNode web UI at http://localhost:9870. Click Utilities > Browse the file system.
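The sample output above ends with an escaped JSON string closed by an outer brace, which suggests that each stored record wraps the original alert as a JSON-encoded string. The sketch below decodes such records line by line and skips fragments that do not parse, such as the first line returned by -tail, which usually starts mid-record. The outer field name `message` is an assumption, the `decode_alert_lines` helper is hypothetical, and the sample data is made up for illustration.

```python
import json


def decode_alert_lines(lines, field="message"):
    """Yield alert dictionaries from JSON-lines records.

    Each record is expected to hold the alert under `field`, either as a
    nested object or as a JSON-encoded string. Unparseable lines are skipped.
    """
    for line in lines:
        line = line.strip()
        if not line:
            continue
        try:
            record = json.loads(line)
        except json.JSONDecodeError:
            continue  # truncated or partial record
        payload = record.get(field) if isinstance(record, dict) else None
        if isinstance(payload, str):
            try:
                payload = json.loads(payload)
            except json.JSONDecodeError:
                continue
        if isinstance(payload, dict):
            yield payload


# Made-up sample: one truncated fragment followed by one complete record.
sample = [
    ':::* 4071/rpcbind","location":"netstat listening ports"}"}',
    json.dumps({"message": json.dumps({"rule": {"level": 7, "description": "demo alert"}})}),
]
alerts = list(decode_alert_lines(sample))
print(len(alerts), alerts[0]["rule"]["level"])  # 1 7
```

To use it on real data, pipe the output of the hadoop fs command into the script and pass `sys.stdin` as `lines`, adjusting `field` to whatever key your records actually use.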

Conclusion

In this post, we explored how integrating Wazuh with Fluentd can enhance your log management capabilities. By forwarding Wazuh alerts to Hadoop, you can take advantage of big-data analytics and machine learning workflows for advanced data processing and insights.
